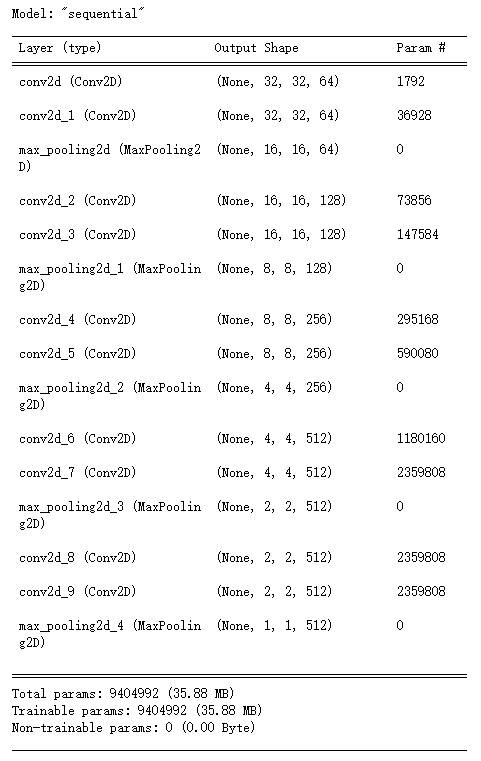

| conv_net = Sequential([

layers.Conv2D(64, kernel_size=[3,3], padding="same", activation=tf.nn.relu),

layers.Conv2D(64, kernel_size=[3,3], padding="same", activation=tf.nn.relu),

layers.MaxPool2D(pool_size=[2,2], strides=2, padding="same"),

layers.Conv2D(128, kernel_size=[3,3], padding="same", activation=tf.nn.relu),

layers.Conv2D(128, kernel_size=[3,3], padding="same", activation=tf.nn.relu),

layers.MaxPool2D(pool_size=[2,2], strides=2, padding="same"),

layers.Conv2D(256, kernel_size=[3,3], padding="same", activation=tf.nn.relu),

layers.Conv2D(256, kernel_size=[3,3], padding="same", activation=tf.nn.relu),

layers.MaxPool2D(pool_size=[2,2], strides=2, padding="same"),

layers.Conv2D(512, kernel_size=[3,3], padding="same", activation=tf.nn.relu),

layers.Conv2D(512, kernel_size=[3,3], padding="same", activation=tf.nn.relu),

layers.MaxPool2D(pool_size=[2,2], strides=2, padding="same"),

layers.Conv2D(512, kernel_size=[3,3], padding="same", activation=tf.nn.relu),

layers.Conv2D(512, kernel_size=[3,3], padding="same", activation=tf.nn.relu),

layers.MaxPool2D(pool_size=[2,2], strides=2, padding="same")

])

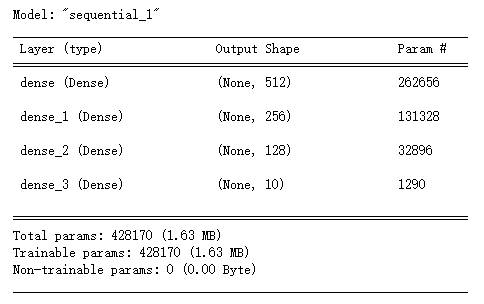

fc_net = Sequential([

layers.Dense(512, activation=tf.nn.relu),

layers.Dense(256, activation=tf.nn.relu),

layers.Dense(128, activation=tf.nn.relu),

layers.Dense(10, activation=None)

])

|
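To see how the convolutional stack feeds the fully connected head, it can help to trace the tensor shapes by hand. The sketch below does this in plain Python, without TensorFlow. It assumes a 32x32 input image, which the listing does not state, and the helper names `same_padding_out` and `trace_conv_shapes` are hypothetical, introduced only for illustration. It relies on the rule that a layer with `padding="same"` produces a spatial size of `ceil(input_size / stride)`.

```python
import math


def same_padding_out(size: int, stride: int) -> int:
    """Spatial output size of a conv or pool layer with padding="same"."""
    return math.ceil(size / stride)


def trace_conv_shapes(height, width, block_filters=(64, 128, 256, 512, 512)):
    """Return the (height, width, channels) shape after each block.

    Each block mirrors the listing: two stride-1 convolutions with "same"
    padding, then a 2x2 max pool with stride 2 and "same" padding.
    """
    shapes = []
    for filters in block_filters:
        # The two stride-1 "same" convolutions keep the spatial size.
        for _ in range(2):
            height = same_padding_out(height, 1)
            width = same_padding_out(width, 1)
        # The stride-2 pool halves the spatial size, rounding up.
        height = same_padding_out(height, 2)
        width = same_padding_out(width, 2)
        shapes.append((height, width, filters))
    return shapes


# Assumed input size (not given in the listing): 32x32 pixels.
shapes = trace_conv_shapes(32, 32)
for block, shape in enumerate(shapes, start=1):
    print(f"after block {block}: {shape}")

# Number of features per image once the last feature map is flattened.
final_h, final_w, final_c = shapes[-1]
flat_features = final_h * final_w * final_c
print("flattened features:", flat_features)
```

Under that assumption the last feature map is 1x1x512, so each image flattens to a 512-element vector before it enters `fc_net`. A different input size changes the flattened length; for example, a 64x64 input would leave a 2x2x512 map, or 2048 features.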